The Safe Harbor Trap

A Longwinded Critique of OpenAI’s Bid to Bypass California AI Safety Law

On August 11, 2025, Chris Lehane, Chief of Global Affairs for OpenAI and proclaimed modern-day “master of the political dark arts”,1 addressed a letter to Gov. Gavin Newsom regarding the state’s approach to AI safety legislation. The letter argues that compliance with emerging federal and international frameworks should grant developers a "safe harbor" from California's regulations. This proposal directly targets the core mechanisms of pending legislation like SB 53, which aims to establish transparency and safety requirements for frontier AI models.

This request, while framed in the language of "harmonization," is a sophisticated attempt at regulatory arbitrage. It is a strategic move to water down what is already a very light-touch approach, asking California to outsource its responsibility to protect its citizens by equating vastly different mechanisms (voluntary international codes and specialized federal agreements) with enforceable state law.

Furthermore, the context surrounding the letter has shifted dramatically. Last Friday, the Assembly Appropriations Committee significantly narrowed SB 53. Crucially, the amendments removed the mandatory third-party safety audit requirement, which, even in earlier versions, was not slated to take effect until 2030, and raised the threshold for "covered developers" from $100 million to $500 million. These changes are unfortunate, but they also render several of OpenAI's arguments regarding regulatory burden obsolete while making its central request for a safe harbor even more problematic.

I am going to try to examine Lehane’s arguments line by line, hopefully without dignifying them too much.

1. The Core Ask: Harmonization vs. Substitution

The letter is framed around a seemingly reasonable request:

Subject: Recognition of International and Federal AI Safety Frameworks for State Law Compliance

P2: "Harmonizing state-based AI regulation with emerging global standards is a critical step... Recognizing compliance with established international and federal frameworks as sufficient for state law compliance is key to achieving this balance."

The core flaw in this argument is the conflation of "harmonization" with "substitution."

Harmonization involves aligning definitions and reducing duplicative paperwork while preserving independent enforcement authority.

Substitution (a safe harbor) means that compliance with Framework A automatically deems a company compliant with Framework B, effectively nullifying Framework B's independent authority.

Let’s be very clear. Lehane is asking for substitution. He asks California to accept external frameworks as a complete replacement for state-level public safety mandates. This strategy, pretending different regulatory mechanisms are the same, fundamentally undermines the state's ability to set and enforce its own safety standards.

2. The Missing Federal Framework and the Preemption Fallacy

The sincerity of OpenAI's call for harmonization and alignment with federal standards is questionable when analyzed against the backdrop of federal inaction. I agree with the large AI labs that a consistent, robust federal framework for AI safety and transparency is undeniably preferable to a patchwork of state laws. However, the comprehensive federal framework that OpenAI suggests should preempt state law does not currently exist.

California, and other states, are stepping in precisely because of this federal vacuum. If the goal were truly a coherent regulatory landscape centered on federal leadership, OpenAI should be loudly and publicly advocating for a federal AI safety and transparency law that incorporates the key accountability mechanisms present in SB 53: mandatory public disclosure of safety protocols, critical incident reporting, and robust whistleblower protections.

To date, OpenAI has not laid out a coherent set of principles for such US federal legislation, nor has it directed significant lobbying resources toward passing a federal safety bill. Instead, OpenAI, together with aligned interests including its co-founder Greg Brockman, is running Lehane’s Fairshake crypto playbook, pledging over 100 million dollars to fight state regulations. While competitors like Anthropic have called for federal oversight mechanisms, a unified industry push for binding federal regulation has been notably absent.

This inconsistency undermines the argument for federal preemption, where federal law supersedes state law. Preemption is only justifiable when the federal standard meets or exceeds the protections offered by the states. Advocating for preemption based on the current landscape of voluntary commitments and specialized evaluations is simply an attempt to escape regulation altogether.

Furthermore, it is in the long-term interest of AI labs to support reasonable safety measures now. As policy experts, including Anthropic’s Jack Clark, have emphasized in Congressional testimony, "In the absence of a federal framework, I worry that we are just creating a vacuum in which … should there be an accident or misuse, into that vacuum will flood in really really extreme over-regulation that could damage this industry."2 The pragmatic reality is that proactive, well-designed regulation today is far superior to the rushed, reactionary, and potentially draconian policies that will inevitably follow a significant AI-driven catastrophe. By resisting light-touch transparency measures now, the industry increases the risk of innovation-stifling regulation tomorrow.

3. The False Equivalence of the EU Code of Practice

P3: "We became the first US AI company to announce our intent to sign the EU AI Act CoP. The CoP requires signatories to uphold principles of transparency, safety, and fairness..."

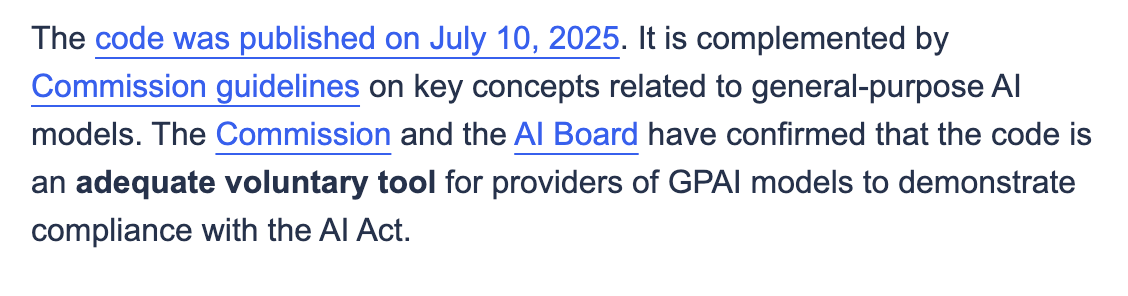

Lehane presents the EU General-Purpose AI (GPAI) Code of Practice (CoP) as a rigorous standard sufficient for state compliance. This is misleading on two key fronts.

First, the EU CoP is explicitly voluntary. It is a tool designed to help providers demonstrate compliance with the actual law, the EU AI Act. It is not the law itself. If California were to grant a safe harbor based on the CoP, it would be granting legal immunity based on a voluntary commitment, leaving the state with fewer enforcement levers than the European Union chose to retain.

Second, the CoP is significantly weaker on actual transparency than proposals like SB 53. While the CoP includes a "transparency" section, it largely focuses on providing information to regulators and customers upon request, with public disclosure treated as optional. SB 53, conversely, mandates the public disclosure of safety and security protocols. Accepting the CoP as a substitute would sacrifice this crucial element of democratic accountability.

4. The Limits of Federal Oversight (CAISI)

P4: "OpenAI committed to work with the US federal government and its Center for AI Standards and Innovation (CAISI) to conduct evaluations of frontier models’ national-security-related capabilities."

The letter misleadingly (again) treats voluntary engagement with CAISI as equivalent to comprehensive regulatory compliance, or even compliance with the EU CoP. CAISI (housed within NIST) agreements often amount to bespoke, voluntary arrangements to conduct specific tests with a government agency. They are fundamentally different from the binding requirements of proposed state laws like SB 53. This is not the first time OpenAI has tried to argue for voluntary commitments in exchange for preemption (see OSTP submission below).

Furthermore, CAISI's mandate is specific and limited. As emphasized in the White House AI Action Plan, its focus is heavily tilted toward national security. This mission is distinct from California’s broader governance interests.

Federal evaluations conducted by CAISI may involve classified information, need not be public, and are not enforceable under California state law. This situation demands layered governance, not federal preemption. The state must maintain the authority to enforce transparency, incident reporting, and accountability for risks impacting Californians locally.

5. The Obsolete Argument: Burdens and Startups

P6: "We also encourage you to support smaller developers by ensuring that any new regulatory regime does not impose undue burdens on startups and open-source developers. A liability regime that stifles innovation would only serve to entrench large, incumbent companies..."

Although I take issue with calling a $100 million developer small, this argument about regulatory burden and the impact on startups is now largely irrelevant in the context of SB 53. The August 29 amendments by the Assembly Appropriations Committee directly addressed these concerns:

The threshold for a "covered developer" was raised from $100 million to $500 million.

The requirement for mandatory third-party safety audits, often cited as the most burdensome element, was removed.

SB 53, as amended, explicitly targets only the largest, most well-resourced frontier AI labs. The remaining requirements, publicly disclosing safety protocols and reporting critical safety incidents, are proportionate and essential. Given how non-burdensome the amended bill is, any vague or implied threats by industry players that such legislation would cause them to slow down product deployment or pull out of California are simply not plausible.

6. The "CEQA for AI" Fallacy

P7: "We don’t want to create a ‘CEQA for AI innovation.’ CEQA... has been notoriously abused to block or delay critical infrastructure projects..."

Lehane invokes the California Environmental Quality Act (CEQA), known for lengthy project-by-project environmental reviews and litigation hooks. This comparison is rhetorical hyperbole, not substantive analysis, and a shallow attempt to frame SB 53 as somehow counter to the YIMBY “Abundance” movement.

Legislation like SB 53, particularly in its amended form, bears no resemblance to CEQA. There is no environmental-impact-statement-style process, no project-specific injunction mechanism, and no longer a third-party audit requirement. The analogy is a distraction. A more accurate analogy is California’s existing privacy regime under the CPPA, which requires reasonable risk assessments and cybersecurity audits without freezing technological development.

7. State Capacity and Responsibility

P8: "National frameworks such as CAISI or CoP have the capacity for safety reviews a state simply cannot do. For example, evaluating the national security risks of frontier models requires access to classified information..."

This is partially true but misleading. It is true that California should not independently run red-teaming evaluations against classified biological threats or sensitive intelligence capabilities.

However, it is false that the state lacks the capacity for its core governance functions. California absolutely has the capacity, and the responsibility, to mandate public-facing safety protocols, require reporting of critical incidents (such as loss of model control or evidence of assistance in creating CBRN weapons), and enforce whistleblower protections to surface risks early.

8. The Economic and Geopolitical Context

P1: "...the AI sector is already driving tremendous innovation in our state’s economy while adding billions of dollars of revenue to the budget..."

P9: "Aligning with global standards also sends a powerful message about the importance of democratic AI governance... as opposed to autocratic AI."

The letter attempts to leverage both economic impact and geopolitical concerns to argue against state-level regulation.

The economic argument implies that the prospect of revenue justifies outsourcing the state’s safety regime. Economic benefit does not negate the need for oversight, especially when capability gains and systemic risks are rising in parallel. As noted above, the idea that current legislative proposals pose a significant economic burden is implausible.

The geopolitical argument suggests that strong state regulation undermines a united democratic front. On the contrary, the concept of states as "Laboratories of Democracy" is crucial here. Historically, California's leadership (the "California Effect") has often driven national and global standards (e.g., emissions, privacy). By implementing robust transparency mechanisms, California reinforces democratic accountability, rather than undermining it.

9. The Non-Profit Misdirection

P10: "Since OpenAI is a non-profit dedicated to building AI that benefits all of humanity, we are committed to working with you..."

This description of OpenAI's structure and mission is imprecise. Structurally, OpenAI operates through a complex hybrid model where a non-profit board oversees a for-profit subsidiary (which is attempting a transition to a Public Benefit Corporation). The entity developing and commercializing the frontier models operates with commercial incentives.

Furthermore, the letter slightly misstates the mission. OpenAI’s stated mission is to ensure that Artificial General Intelligence (AGI) benefits all of humanity. This precision matters, particularly amid ongoing public debates and legal scrutiny regarding the organization's fidelity to its founding principles and potential "mission creep."

Regardless of the corporate structure or the nobility of the mission, good governance requires independent verification and accountability. A company's tax status is not a substitute for enforceable safety regulations.

Conclusion: A True "California Approach"

Lehane concludes by urging the Governor to:

P11: “Be the first to sign onto a ‘California Approach’ that reinforces those standards.”

The "California Approach" proposed in the letter is hollow; it is merely an attempt to treat different regulatory frameworks as interchangeable to avoid accountability.

A genuine "California Approach" must preserve the state's authority and adhere to the "trust but verify" principle, leveraging the superior public transparency mandated by legislation like SB 53. It should also serve as a catalyst for the robust federal action that the industry claims to support.

Harmonization is good governance; substitution is regulatory capture. California must maintain its ability to verify industry claims and enforce transparency. Then, if California’s standards pressure the federal government and the industry to adopt a robust national framework, the state will have achieved harmonization through strength, not deference.

This whole article is great for understanding the man OpenAI chose to lead its global affairs.

https://www.newyorker.com/magazine/2024/10/14/silicon-valley-the-new-lobbying-monster

The quote is hand-transcribed from the video and may contain errors.